Welcome to Edition #53, wherein I stagger back to my keyboard from my brief hiatus only to find everything exactly where I left it, for better and for worse. “Just when I thought I was out…,” etc etc etc.

“See, now I’m scared.”

Choose Your Own A(I)dventure

We’ve spent a lot of time on algorithmic composition in various forms in the past months, including snippets on applications of generative media tools like GPT-3 that call into question the longevity of human writers like your humble scribe. Late last month, the New Yorker’s Stephen Marche added a solid piece to the canon of human writers writing about being replaced by robo-writers. It is always energizing to contemplate one’s inevitable redundancy.

Marche chronicles an experiment with a new GPT-3 powered product called Sudowrite. Its tagline? “Bust writer’s block and be more creative with our magical writing AI.” I suppose it is catchier than “collect unemployment and be more despondent because your job no longer exists.” As we always say: another promotion for the fine folks in Marketing.

Sudowrite functions as a user interface layer on top of GPT-3, such that a writer can feed inputs to the system and then “auto-generate” a complete text in a coherent style. The idea, in line with Sudowrite’s own marketing message, is that this enables a writer to focus on idea generation and tasteful editing rather than the horrible drudgery of actually writing. [Ed.: God forbid.] In a sense, one might be reminded of the composition process used by digital/visual artists like NFT multimillionaire Beeple, where they focus on creating concepts and selecting specific artistic elements from libraries of pre-generated content.

Marche continues to explore difficult questions of authorship: where does the human end and the machine begin? What might this mean for copyright and intellectual property in the future? And how are we to verify (human) originality for works in a world where tools like Sudowrite exist, regardless of whether in the academic realm or the literary world?

On a related note, it is worth considering whether using tools like Sudowrite to complete unfinished works by now-deceased authors is fair game; I am reminded of the questions I raised in prior Trillium issues about the holographic recreation of Tupac and other artists, and whether there was a difference in having those holograms reenact prior performances as opposed to generating net-new art.

For a related read, I recommend Pamela Mishkin’s intriguing GPT-3 powered longform piece: “Nothing Breaks Like A.I. Heart.” Mishkin’s article is really more of an interactive exhibit, wherein she sets the frame for a story and then, via GPT-3, generates a branching narrative that you can navigate as you see fit. Depending on the algorithmically-generated text that you select, the story will evolve and lead to new decision nodes. You can see the power of the underlying system, and the creative possibilities that it might unlock; you can also see the extent to which a narrative can become substantially influenced by non-human inputs.

The rest of Mishkin’s piece is a mixture of continued narrative and analysis of GPT-3’s strengths and shortcomings, along with the impact of its creative “partnership” on the writing process. It’s also fascinating to see the way in which there is a dialogue between author and algorithm, such that the “machine’s” response to the first human input might actually condition the second human input, and so forth. Choose Your Own Adventure, updated for the 21st century and powered by natural language processing.

Slowed soundtracks

My affection for slowed & reverb remixes is no secret, even if they’re now defined as a very “Gen Z” thing. [Ed.: I feel rather “vanished” by the assertion that slowed creators tend to be teens... Nonetheless, the in-house Trillium Therapist tells me that the feelings of resentment will pass in time.] I also continue to find it curious that many listeners define this genre as something that emerged in the 2017-2020 range, when pitch-shifted remixes have been a thing going back decades. DJ Screw, patron saint of the Trillium Automobile Sound System, is just one example of artist precursors to the anonymous remixers who have catalyzed the slowed subculture on YouTube, TikTok, and beyond.

Nonetheless, I’m a sucker for a good article capturing what’s going on in this world. For the latest, check out this CLASH feature on Gen Z remixers and slowed remixing as a transgressive act. Laura Molloy, the author, does a good job teasing out the audio traits (dreamy, reverb-drenched, codeine-paced) and visual complements (anime loops, pastel colors, melodrama) that characterize this genre. She also nicely summarizes the throughline from Screw to today, and the complicated politics of genre appropriation that exist here and in other corners of the music industry. Worth a read, particularly for the link to this Bee Gees remix (amongst others).

Re(mix)memories

Over the summer, I bookmarked this Input story about a YouTuber (Denis Shiryaev) who applied AI and visual processing techniques to “modernize” the visual look & feel of historical video footage. Then, because something like procuring KN95s—useful for California wildfires AND global pandemics—became a higher priority, I neglected to write about it. Better late than never.

Shiryaev’s work involves “upscaling” this footage and colorizing it so that it feels familiar to a 21st century viewer. In addition to expanding the palette and increasing the frame rate, Shiryaev leveraged AI models trained on extensive datasets of human faces to “enhance” those within the footage; the effect is noticeable and adds to the overall uncanniness of seeing something that we know is very old but which now looks quite recent. Of course, this technique, and the practice of re-colorization, introduces a fictional element to the project. Here’s an example of San Francisco’s Market Street, circa 1906, as we head towards the Ferry Building:

What are we to call this sort of media? It’s not really a deepfake, because the footage was captured in the real world and preserved for over a century. But, the alterations and algorithmic creative license (#ACL, trademark pending) make it something other than a pure historical record. It’s a hybrid artifact: one that uses the source material of the past and the technology of the present to produce a historical derivative of sorts. Ultimately, this work represents another form of the remix culture that has so defined the internet across genres, from music to memes and, now, to memories.

World Translations

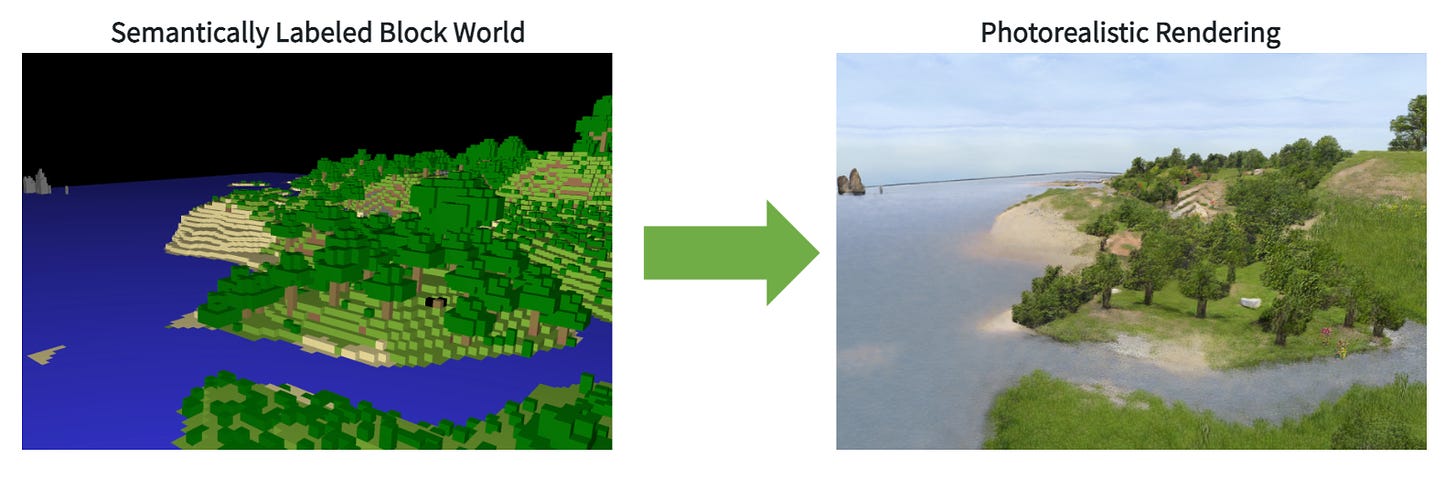

While we’re in the realm of visual world-building, check out this FlowingData piece on a joint NVIDIA/Cornell project called GANcraft. GANcraft is a pretty slick initiative that “aims at solving the world-to-world translation problem.” [Ed.: I did not know that this was a problem, but there you go. Problems abound these days.] Anyway, GANcraft takes “semantically labeled block worlds,” like the visual world inside of games like Minecraft, and converts them into photorealistic facsimiles with the exact same layouts.

Now look, you might say, isn’t the whole point of these games to pretend the real world doesn’t exist? And you would be right. But that doesn’t mean that it isn’t a cool innovation to be able to take 3D blocks representing in-game geography and generate near-consistent “real-looking” environments that render appropriately from any viewpoint the user selects. In this case, the team working on GANcraft used unsupervised neural rendering frameworks. You could imagine a host of applications across gaming and mixed reality products. Early results are impressive:

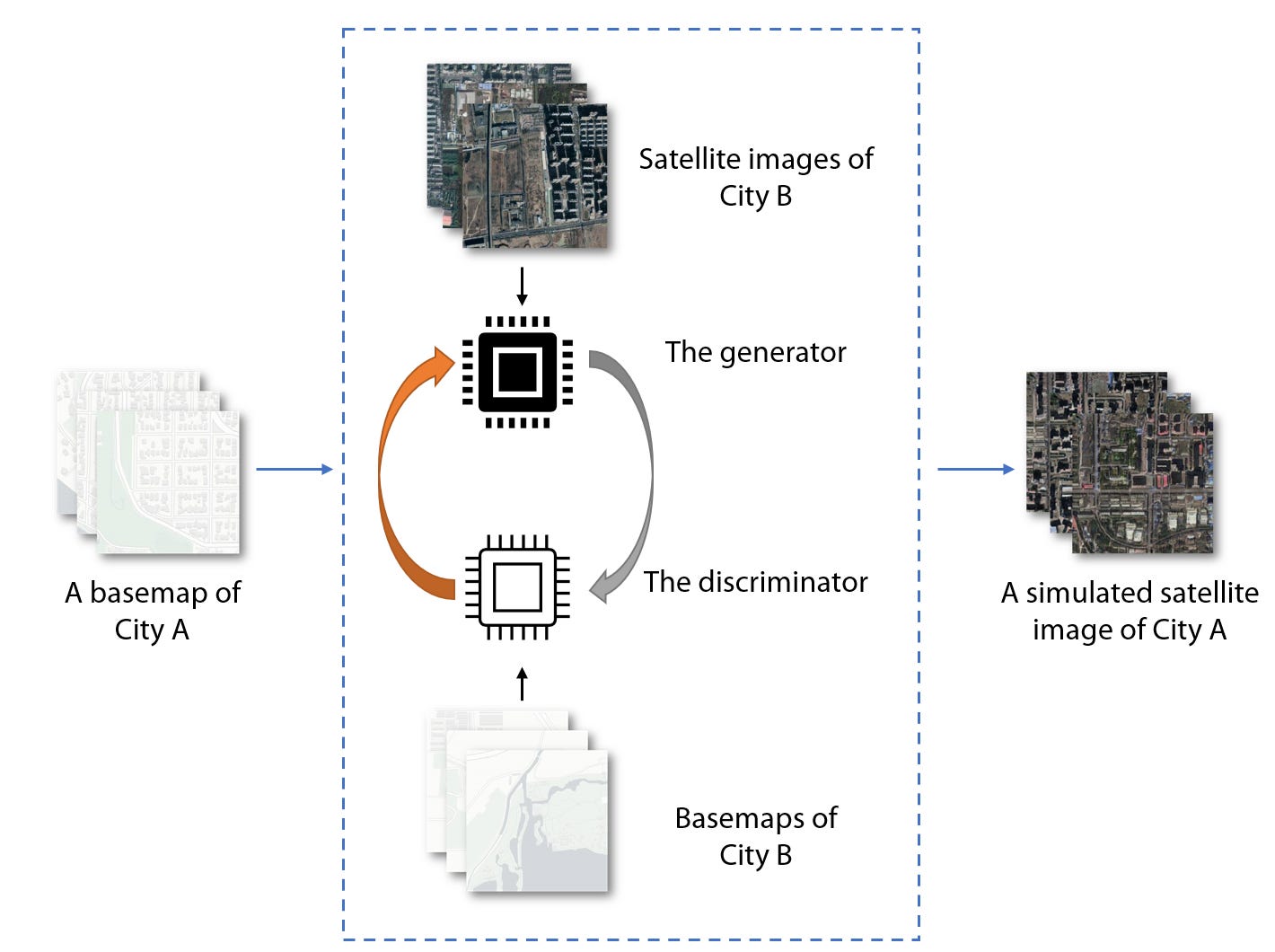

In a similar and perhaps more sinister vein is this TechCrunch article on deepfake satellite maps, which are produced by manipulating satellite imagery to generate “real-looking—but totally fake—overhead maps of cities.” All together now: what could go wrong?

This article was catalyzed by a University of Washington study authored by assistant professor Bo Zhao. Zhao and team focus on what they call “location spoofing” and “deepfake geography” where images of real places are doctored or outright falsified to mislead observers. Given the reliance on satellite data for a whole range of things—including intelligence gathering, military operations, and market/investment decisions—any sort of falsification could have significant implications across several sectors.

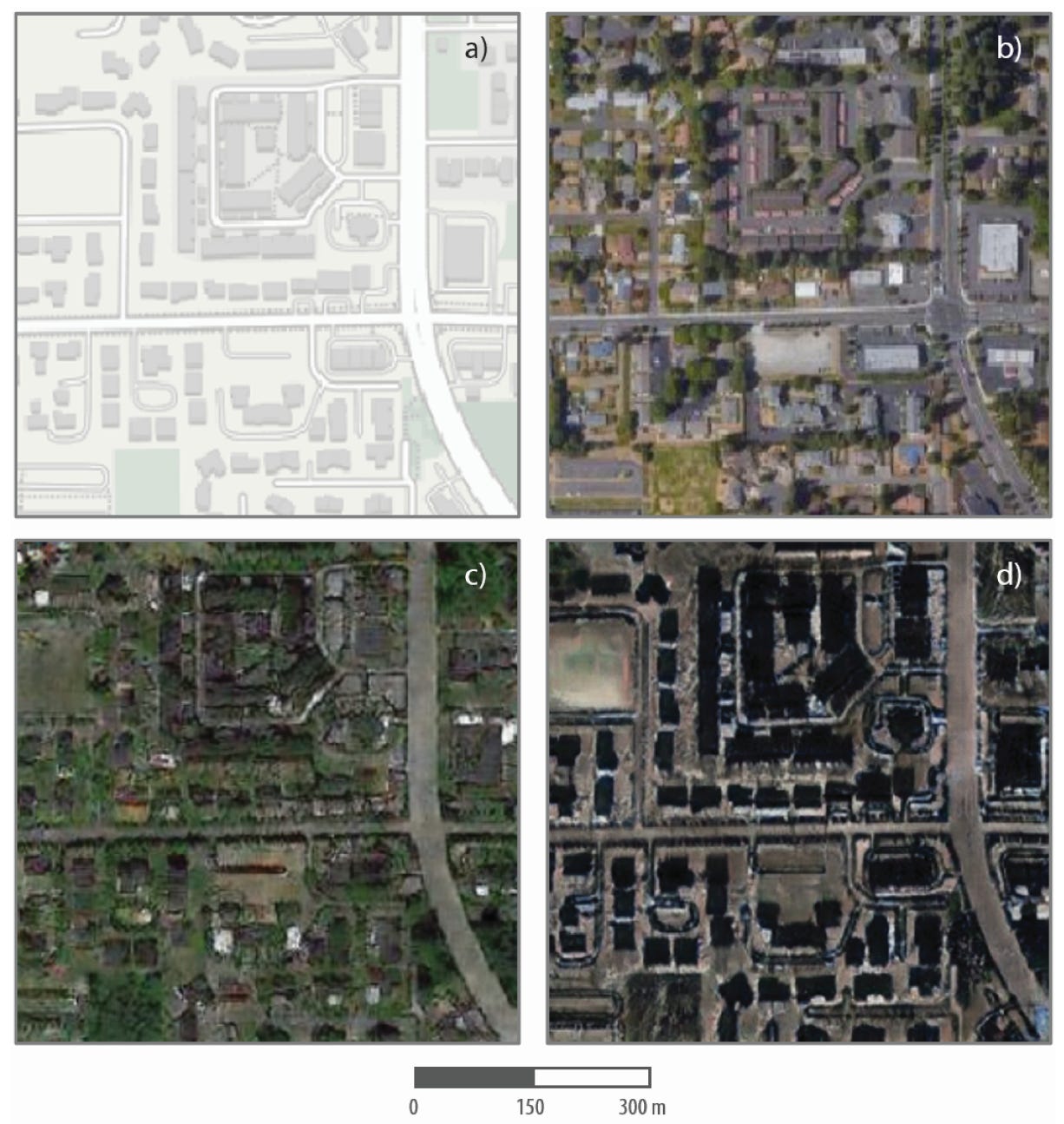

Zhao’s method was to use an AI framework that has been applied to the manipulation of other types of digital files. The way it works is that the algorithm learns the characteristics of an image—say, representing a certain city—and then adapts those features to a “different base map.” In practice, think about modeling Tacoma, WA and then projecting the features of Tacoma onto other city environments (e.g., Beijing).

Here’s a specific example of the same Tacoma neighborhood in map form (top left), satellite form (top right), and algorithmic translation form (bottom left/right). Bottom left shows a Tacoma-as-Seattle hybrid and bottom right shows Tacoma-as-Beijing.

There are plenty of interesting creative applications of this same method: converting old historical maps to satellite approximations, modeling land use proposals, hybrid world-building, and so forth. There’s also the very real possibility that the ability to alter satellite imagery—often perceived as a source of truth regarding what’s happening on the ground IRL—becomes another part of the deepfake media canon, alongside audio, visual, and other formats, and thus further complicates the concrete “knowability” of the world in which we live.

GTARadio

Many of you are, tragically, overly familiar with my long-standing affection for the Grand Theft Auto video games, and, in particular, their incredible in-game radio soundtracking. Since we’ve already hit a Godfather reference and multiple stories about generative media creation in this week’s edition, I figured it wouldn’t hurt to go for the Trillium Trifecta (trademark also pending) by adding a story about music and GTA.

GTA Radio is a very fun novelty project from Apperfect. Like my favorite projects, it is exactly what it sounds like. You select your favorite GTA installment (III, Vice City, IV, V, and San Andreas), choose from amongst that game’s classic radio stations, sit back, relax, and enjoy a trip down degenerate memory lane. I had not realized how much I missed listening to Wildstyle while driving through Vice City’s red light district. I know you do too.

For your ears only

Every year when Spotify does its wrap-up playlists, Jai Paul and his brother A.K. Paul rank amongst my most-listened-to artists. I’d estimate—scientifically speaking—that Jai Paul is in my top 5 list of “most played” going back to 2011-2012 when I first heard “Jasmine” and “BTSU (Edit).” Paul disappeared for a number of years, and has essentially stopped releasing new music. What music has surfaced has generally been in the form of unfinished (but still incredible) demos and leaked snippets.

A.K. Paul, meanwhile, has gone on to release a number of amazing tracks, including collaborations with artists like Nao and Mura Masa. I can’t resist posting my top 3 Jai/A.K. tracks below, real estate be damned.

A couple of weeks ago, Jai Paul briefly turned his website into a pixel-perfect recreation of the MySpace page that launched his music career. It’s now vanished from the Internet, because that is what happens when I try to take a week off. But, it was a cool way for Paul to drop some new music, including a snippet of a track called “Super Salamander.” Fans also discovered that there were two short “BTSU” remixes hidden as .zip files in the website’s code. Because I occasionally/infrequently think ahead, I did rip those when I had the chance. Ping me if you want the files!

To be continued.

N